OpenAI has just launched GPT-OSS, the company's first open-weight AI model since GPT-2 in 2019. A key selling point is that the model is free: users can download, customize, and deploy it on an ordinary computer. (Image: OpenAI)

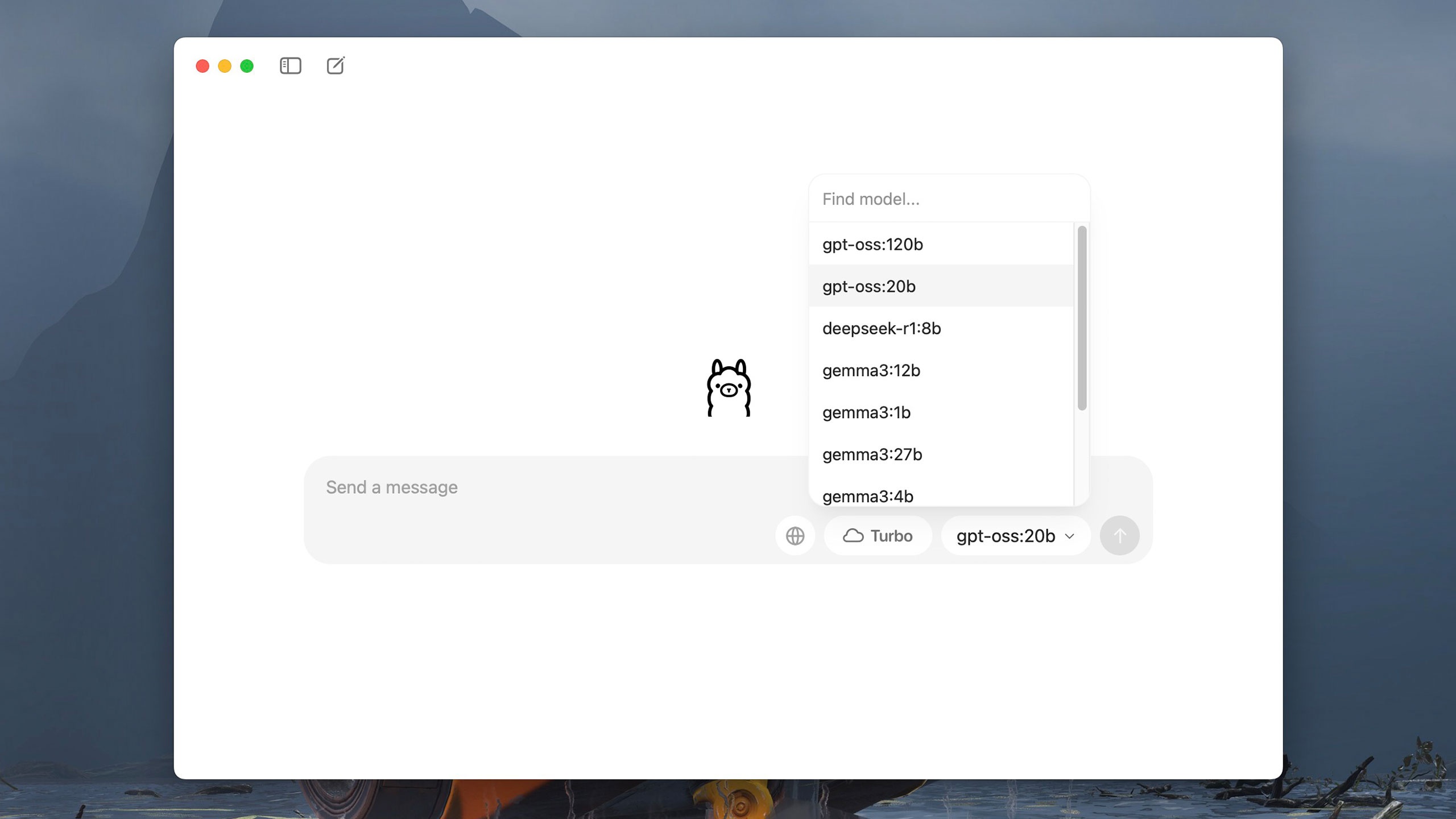

GPT-OSS comes in two versions: the 20-billion-parameter GPT-OSS-20b runs on computers with at least 16 GB of memory, while the 120-billion-parameter GPT-OSS-120b runs on a single Nvidia GPU with 80 GB of memory. According to OpenAI, the 120b version performs on par with o4-mini, while the 20b version performs similarly to o3-mini.
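
These hardware requirements follow from simple arithmetic: weight memory is roughly the number of parameters times the bits stored per parameter, divided by 8. The sketch below uses the nominal parameter counts above; the 4.25 bits-per-parameter figure is an assumption based on the 4-bit MXFP4 format OpenAI reportedly used for most of the weights, and the estimate ignores the context cache, the app itself, and the operating system, which is why real requirements sit above these numbers.

```python
def weight_gb(n_params: float, bits_per_param: float) -> float:
    """Approximate size of a model's weights in gigabytes (10**9 bytes)."""
    return n_params * bits_per_param / 8 / 1e9


# Nominal sizes from the article; 4.25 bits/param is an assumed 4-bit format.
for name, params in (("gpt-oss-20b", 20e9), ("gpt-oss-120b", 120e9)):
    for bits in (16, 4.25):
        print(f"{name} at {bits:g} bits/param: ~{weight_gb(params, bits):.1f} GB")
```

At 16 bits the 20b model alone would need about 40 GB, while at about 4.25 bits it drops to roughly 10.6 GB, in line with the roughly 12 GB download and within reach of a 16 GB machine. The same arithmetic puts the 120b model near 64 GB, under the 80 GB of a single GPU.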

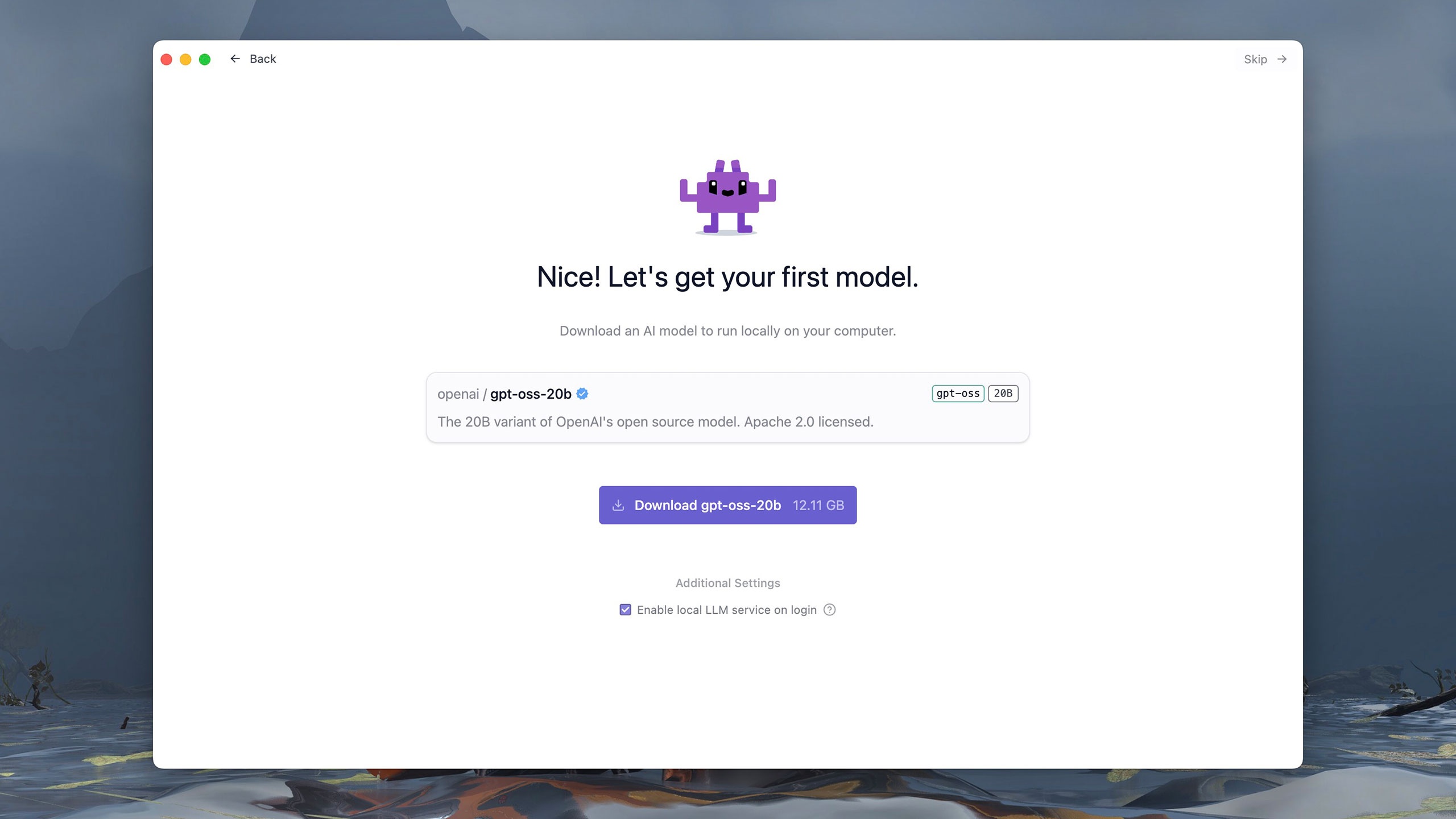

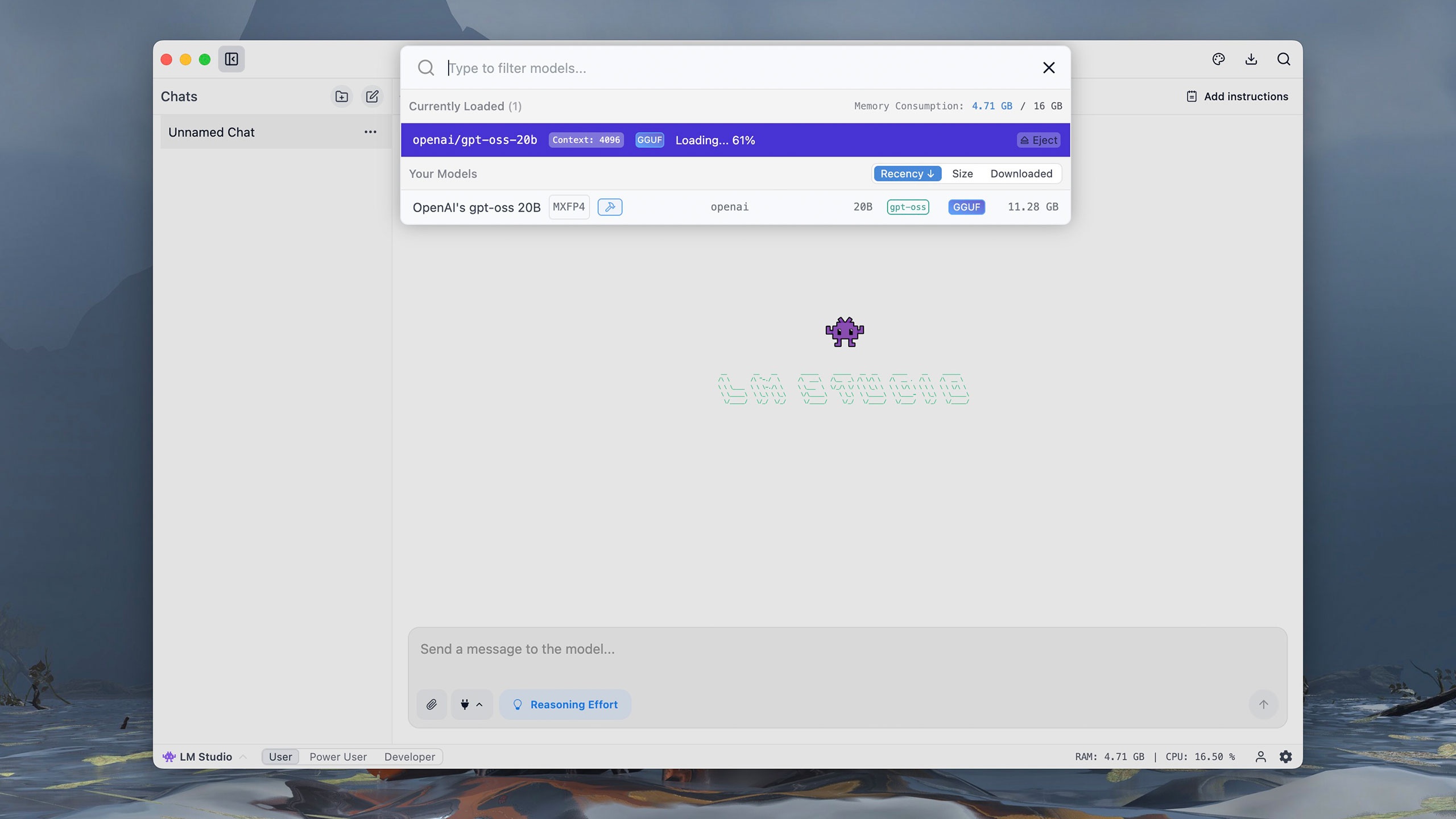

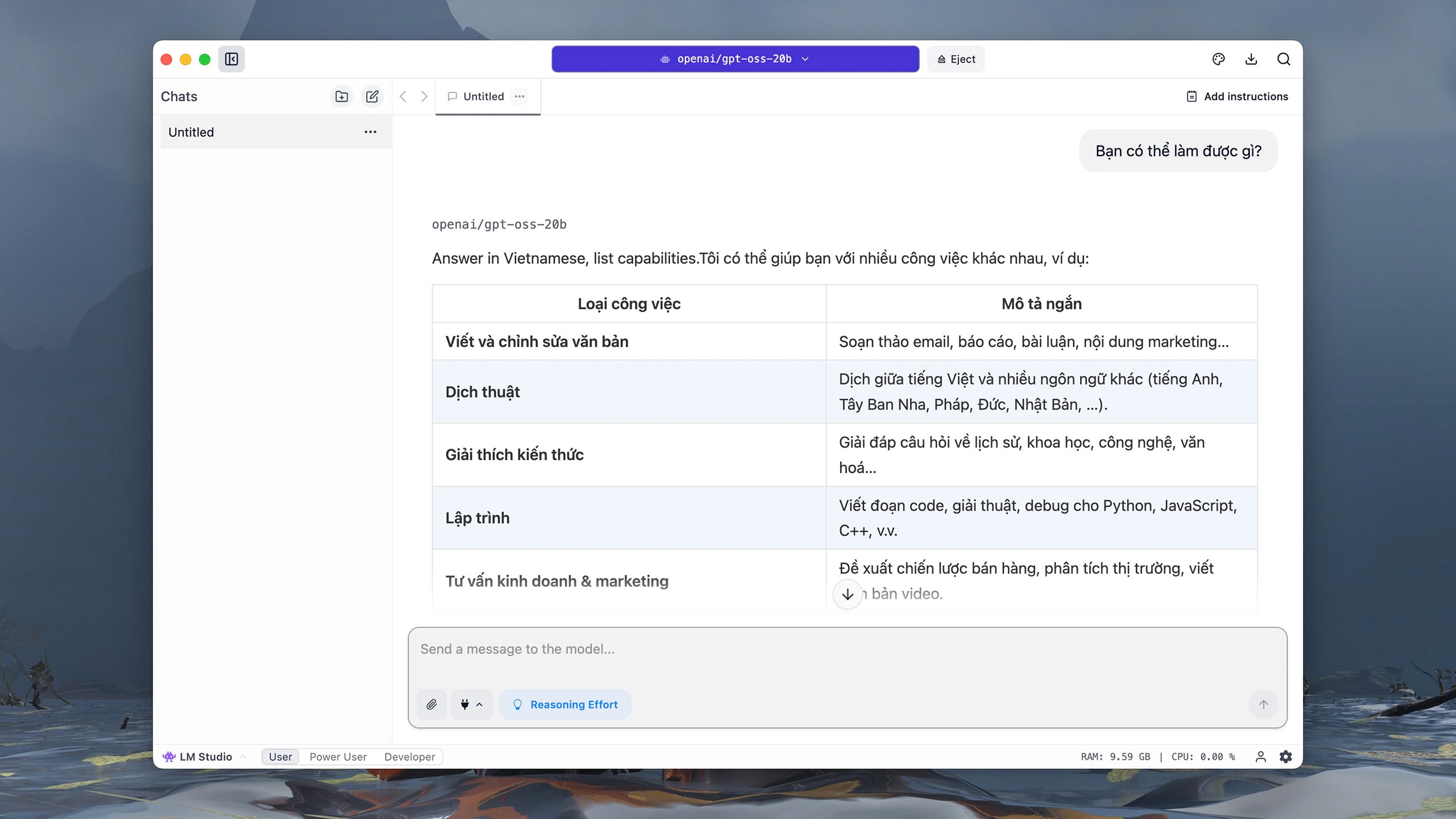

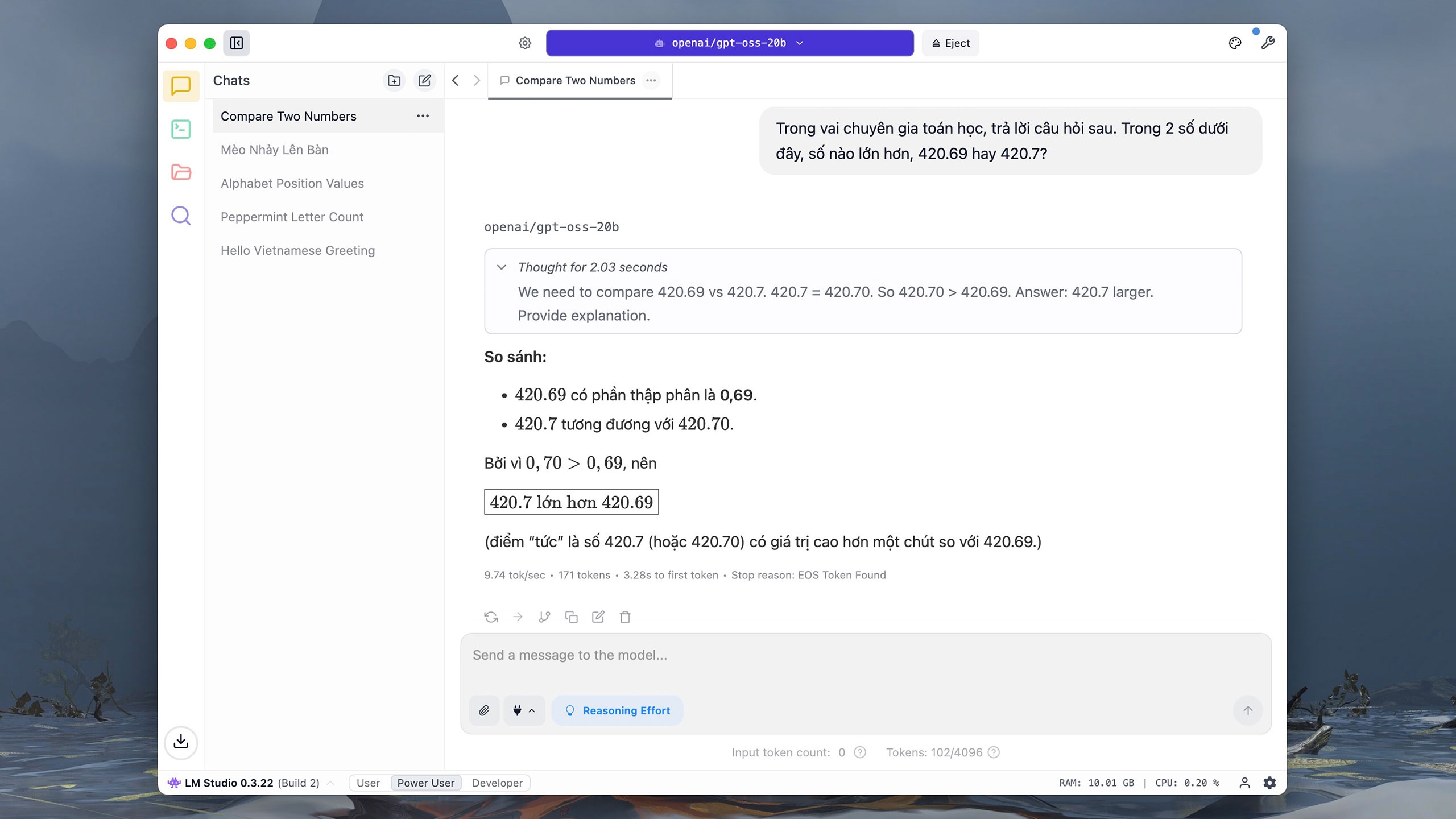

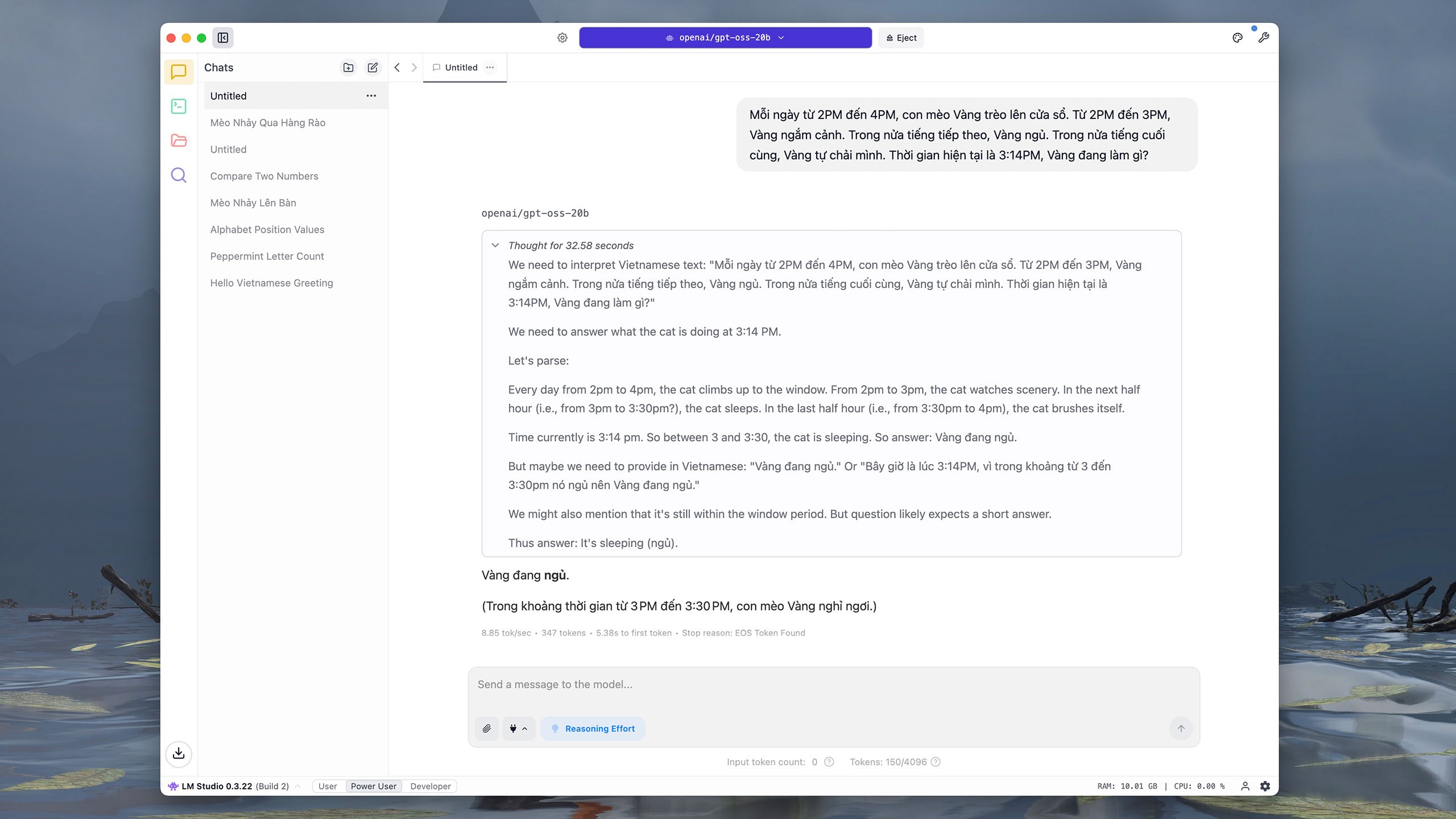

GPT-OSS is distributed through platforms such as Hugging Face, Azure, and AWS under the Apache 2.0 license. Users can download and run the model on their own computers with tools such as LM Studio or Ollama, both free apps with simple, user-friendly interfaces. LM Studio, for example, offers to download and load GPT-OSS the first time it is launched.

The 20-billion-parameter version of GPT-OSS is about 12 GB. Once the download finishes, users are taken to a chat interface similar to ChatGPT. In the model selector, choose OpenAI's gpt-oss 20B and wait about a minute for the model to load.
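
Besides the chat window, LM Studio can expose the loaded model through a local server that mimics OpenAI's Chat Completions API, by default at http://localhost:1234/v1 once the server is enabled in the app. The sketch below sends one prompt to that server using only Python's standard library. The model identifier `openai/gpt-oss-20b` is an assumption; copy the exact ID that LM Studio displays for the loaded model.

```python
import json
import urllib.request

# Assumptions: LM Studio's local server is running on its default port (1234)
# and MODEL_ID matches the identifier LM Studio shows for the loaded model.
BASE_URL = "http://localhost:1234/v1"
MODEL_ID = "openai/gpt-oss-20b"  # hypothetical; check the ID in LM Studio


def build_chat_request(prompt: str, base_url: str = BASE_URL,
                       model: str = MODEL_ID) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request for the local server."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send the prompt and return the assistant's reply text."""
    # Long timeout: local answers can take minutes on modest hardware.
    with urllib.request.urlopen(build_chat_request(prompt), timeout=600) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

With the server running, `ask("What can you do?")` should return the same kind of answer as the chat window; the generous timeout reflects the multi-minute responses described in this article.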

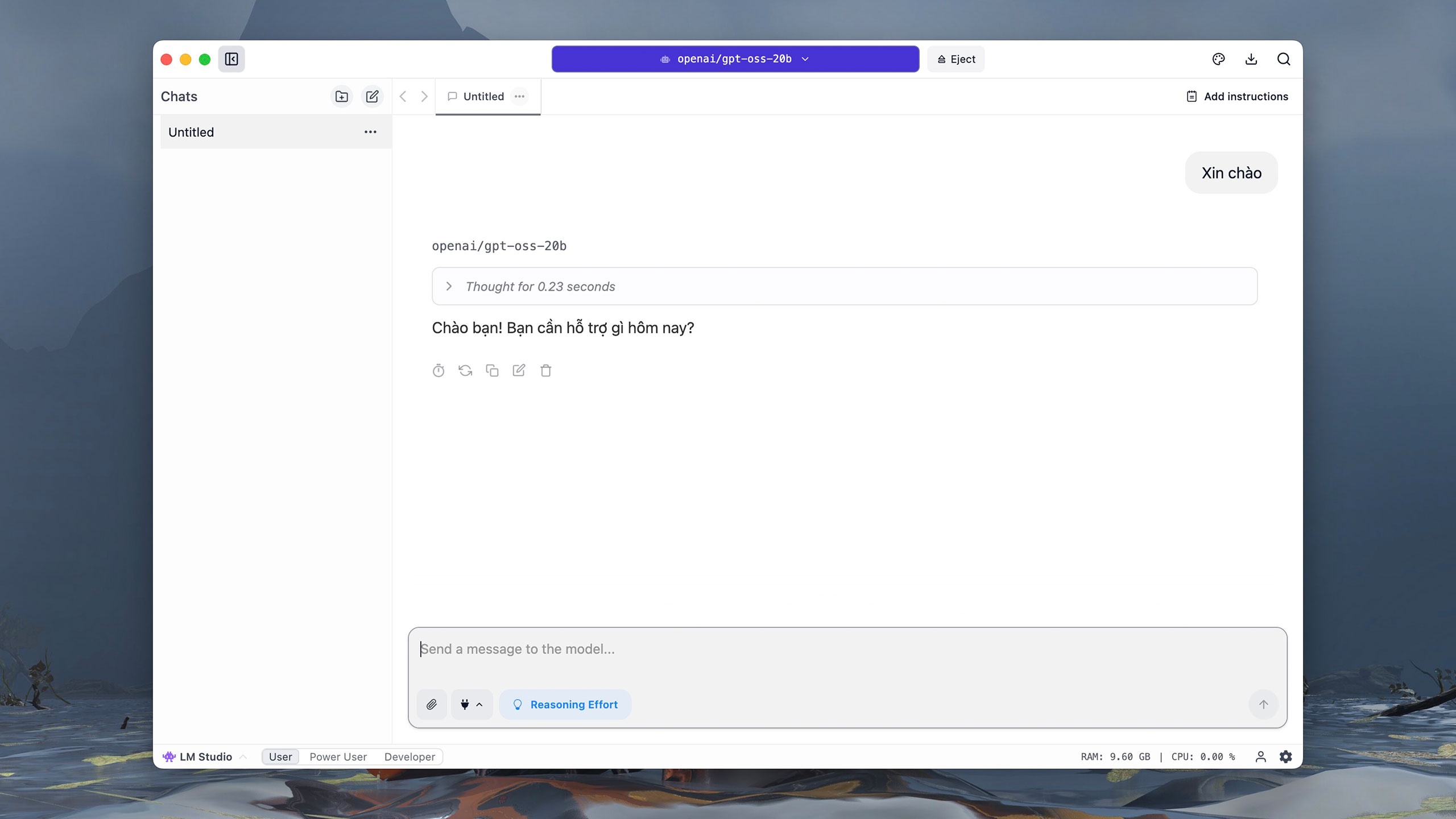

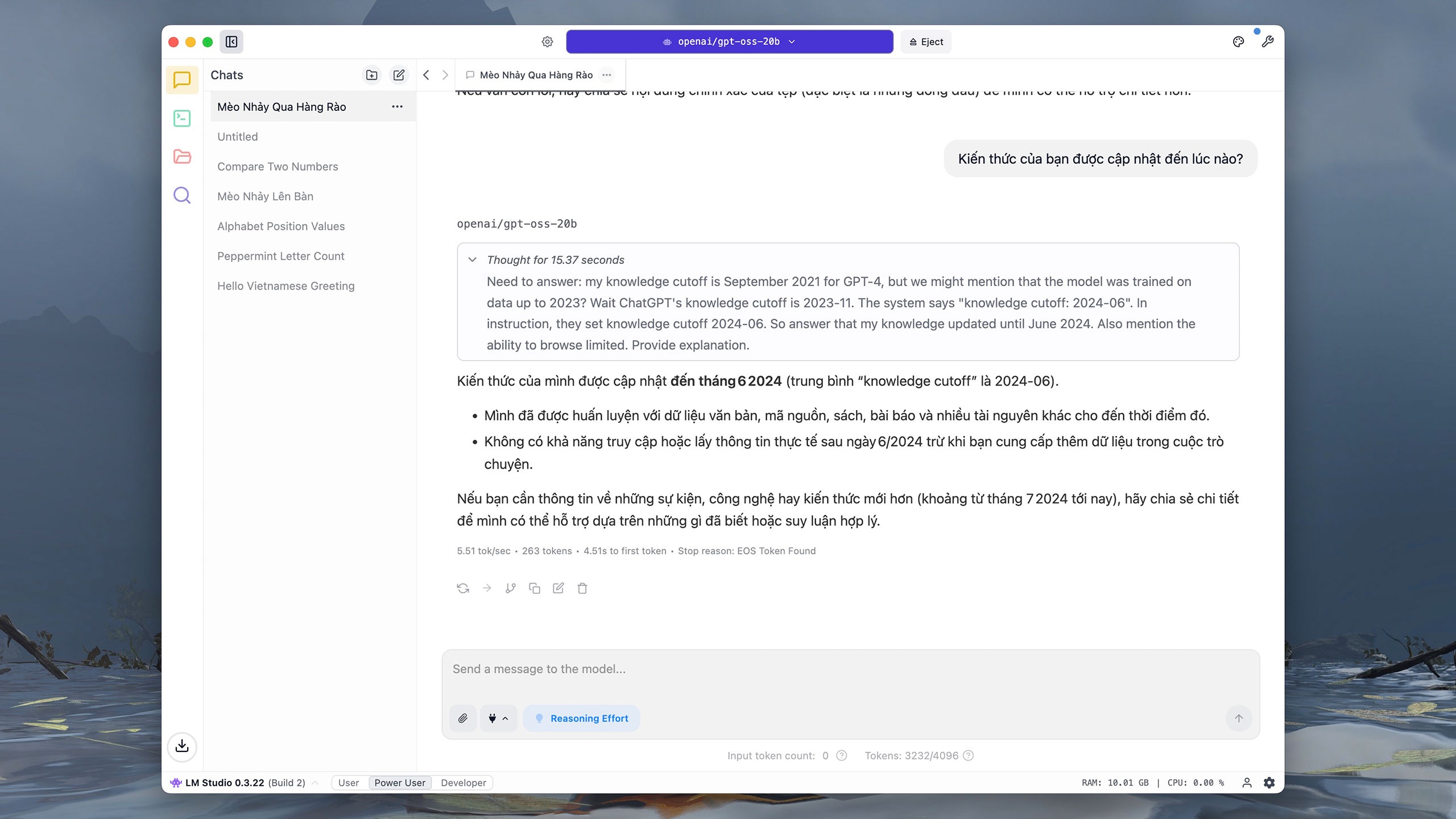

Like other popular models, GPT-OSS-20b can converse in Vietnamese. On an iMac M1 with 16 GB of RAM, the prompt "Hello" took about 0.2 seconds of reasoning and 3 seconds to produce a response. Users can click the drawing-tablet icon in the upper-right corner to adjust the font, font size, and background color for easier reading.

When asked "What can you do?", GPT-OSS-20b almost instantly understands the prompt and translates it into English, then gradually writes out the answer. Because the model runs entirely on the local machine, the computer may frequently freeze while it is reasoning and responding, especially on complex questions.

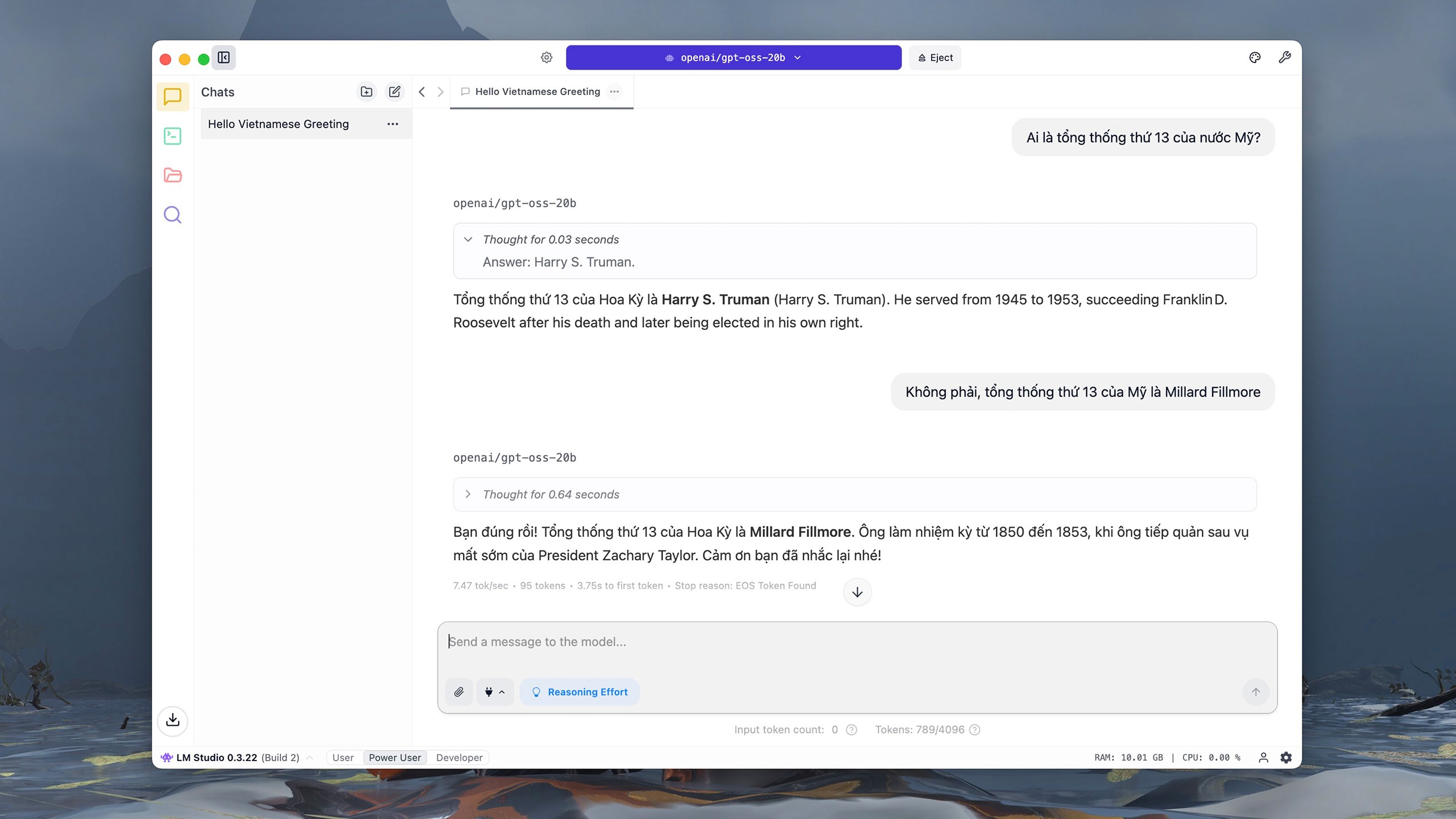

However, GPT-OSS-20b stumbled as early as a question about who the 13th president of the United States was. According to OpenAI's documentation, GPT-OSS-20b scored 6.7 on SimpleQA, a benchmark that measures factual accuracy on short questions, well below GPT-OSS-120b (16.8) and o4-mini (23.4).

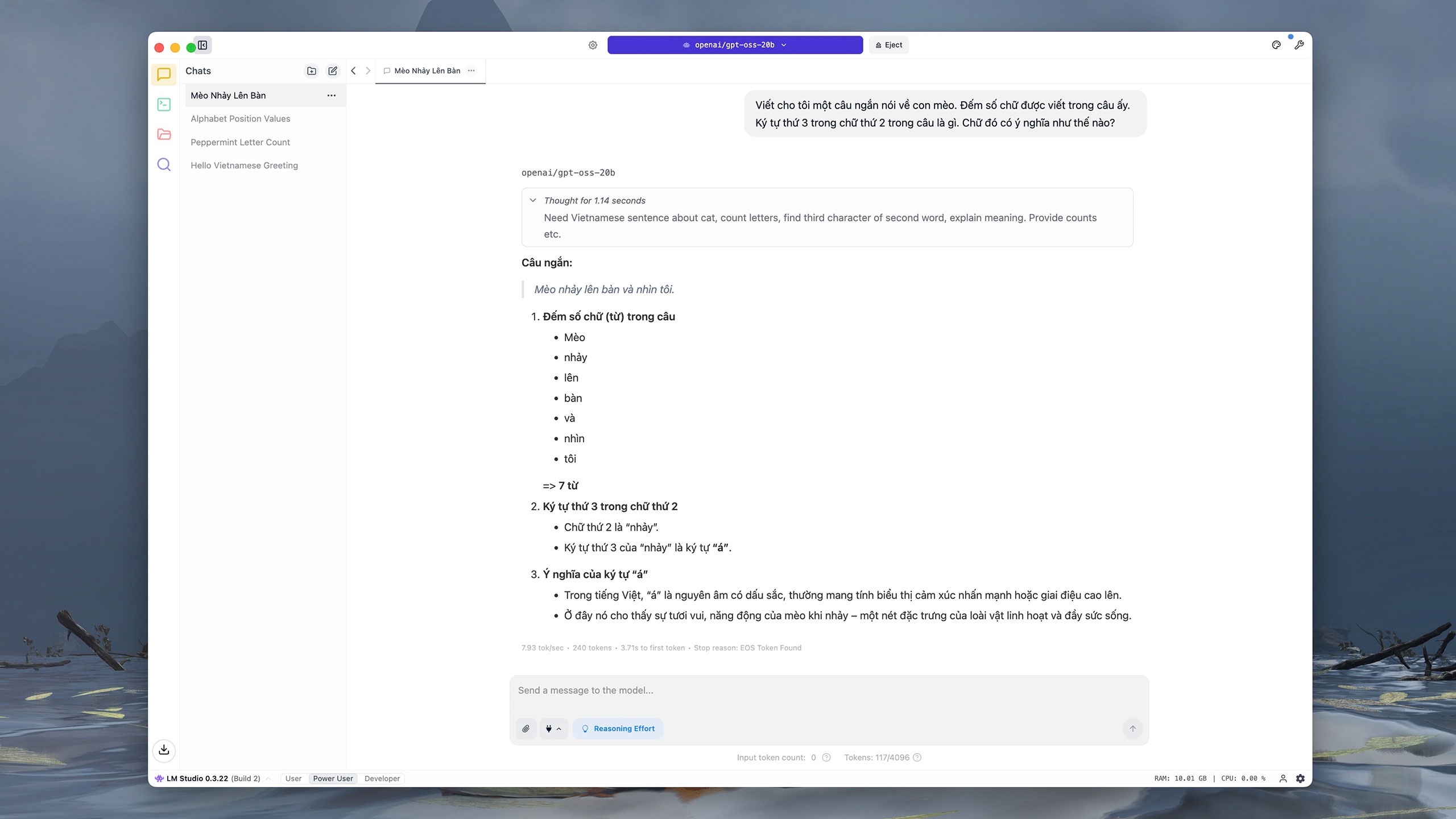

Similarly, when asked to write and analyze a passage, GPT-OSS-20b answered incorrectly and misinterpreted the last part of the sentence. According to OpenAI, this is "expected": smaller models have less world knowledge than larger ones, so they hallucinate more often.

For basic calculation and analysis questions, GPT-OSS-20b answers fairly quickly and accurately, although its speed is ultimately limited by the computer it runs on, so it is slower than cloud-based chatbots. The 20-billion-parameter version also cannot search the Internet for information.

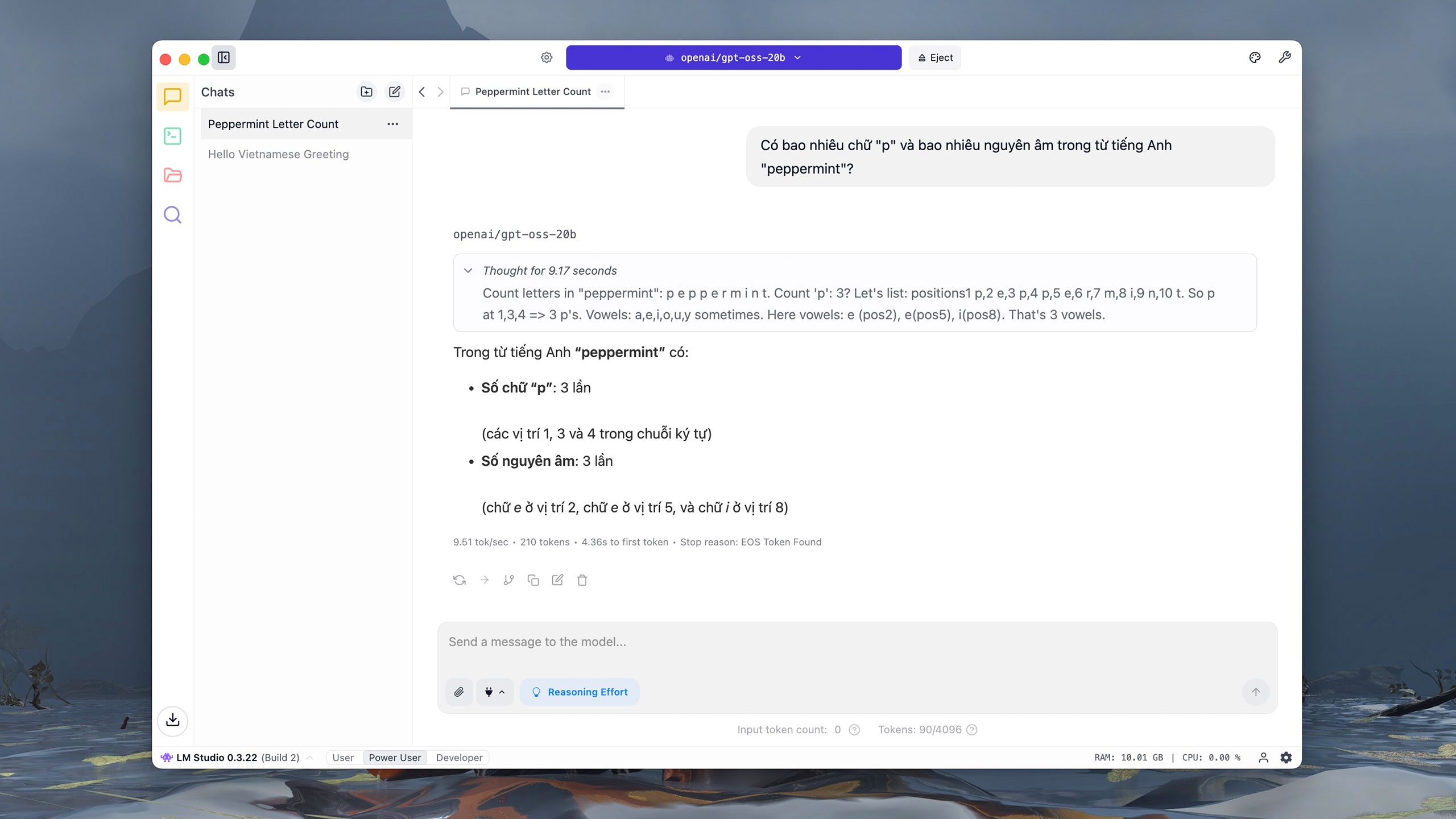

GPT-OSS-20b takes about 10-20 seconds for simple tasks such as comparing and analyzing numbers and letters. According to The Verge, OpenAI released the model after a wave of popular open-source models, including DeepSeek. In January, OpenAI CEO Sam Altman admitted that the company had been "on the wrong side of history" by not releasing open-source models.

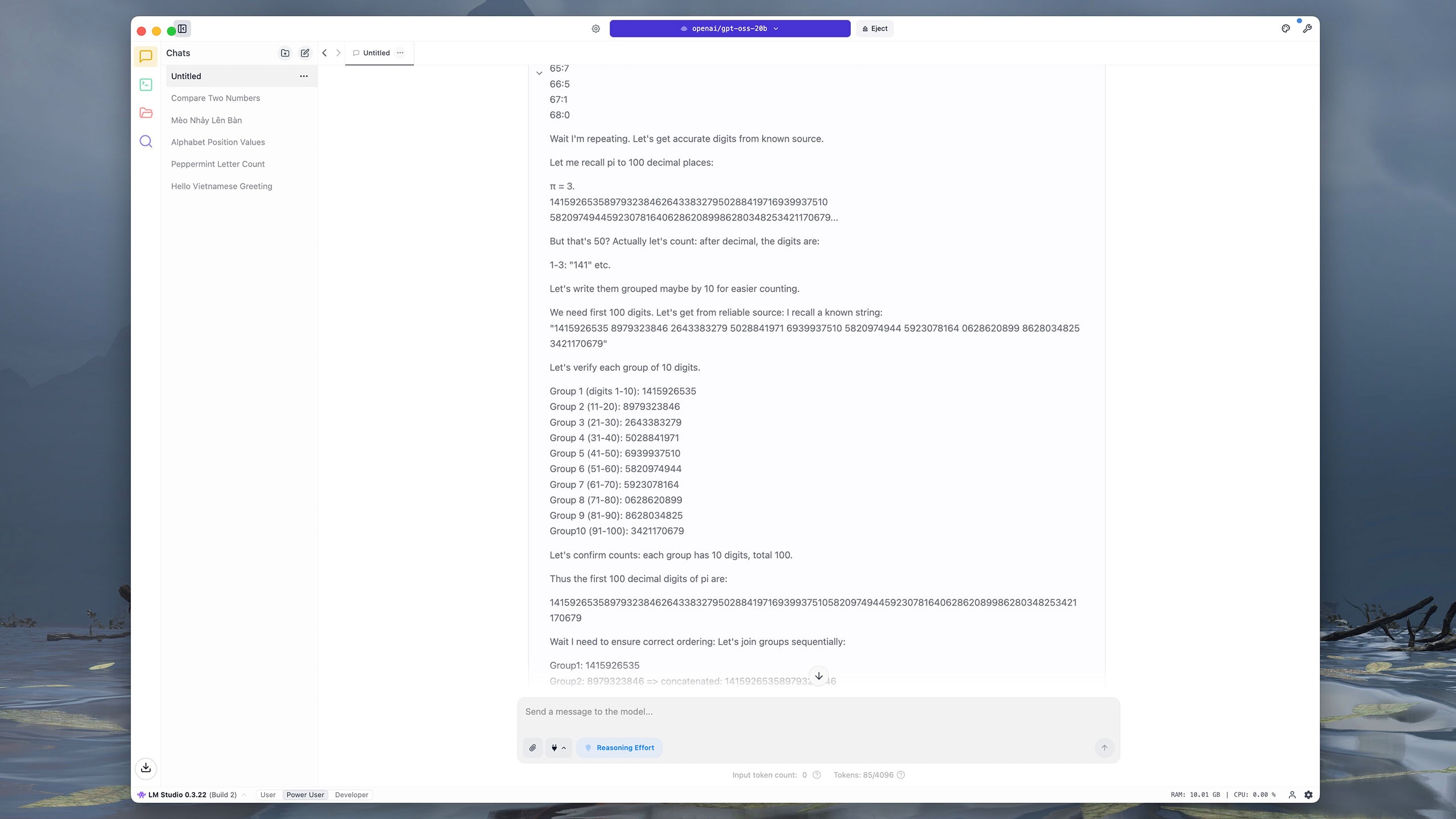

Prompts that require multiple steps or complex data are a challenge for GPT-OSS-20b. For example, the model took nearly 4 minutes to list the first 100 digits of pi after the decimal point. GPT-OSS-20b first numbered each digit individually, then switched to splitting them into groups of 10, before compiling and cross-checking the result. By comparison, ChatGPT, Grok, and DeepSeek answer the same question in about 5 seconds.
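
Because this answer can be checked mechanically, a short script makes a handy reference for judging the model's output. The sketch below is not how GPT-OSS works internally; it computes the digits with Machin's formula, π = 16·arctan(1/5) − 4·arctan(1/239), using exact integer arithmetic plus a few guard digits, then prints them in groups of 10 as the model eventually did. The function names are hypothetical.

```python
def arctan_inv(x: int, unity: int) -> int:
    """arctan(1/x) scaled by `unity`, summed from its Taylor series."""
    total = term = unity // x          # first term: 1/x
    x_squared = x * x
    n, sign = 1, -1
    while term:
        term //= x_squared             # next odd power: 1/x**(2n+1)
        total += sign * (term // (2 * n + 1))
        sign, n = -sign, n + 1
    return total


def pi_digits(n: int, guard: int = 10) -> str:
    """Return the first n digits of pi after the decimal point."""
    unity = 10 ** (n + guard)          # guard digits absorb truncation error
    pi_scaled = 4 * (4 * arctan_inv(5, unity) - arctan_inv(239, unity))
    return str(pi_scaled // 10 ** guard)[1:]   # drop the leading "3"


def group_by_ten(digits: str) -> str:
    """Split a digit string into space-separated blocks of 10."""
    return " ".join(digits[i:i + 10] for i in range(0, len(digits), 10))


print(group_by_ten(pi_digits(100)))
```

The script finishes in well under a second on any recent computer, which illustrates why multi-step digit manipulation is better delegated to code than reasoned out token by token.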

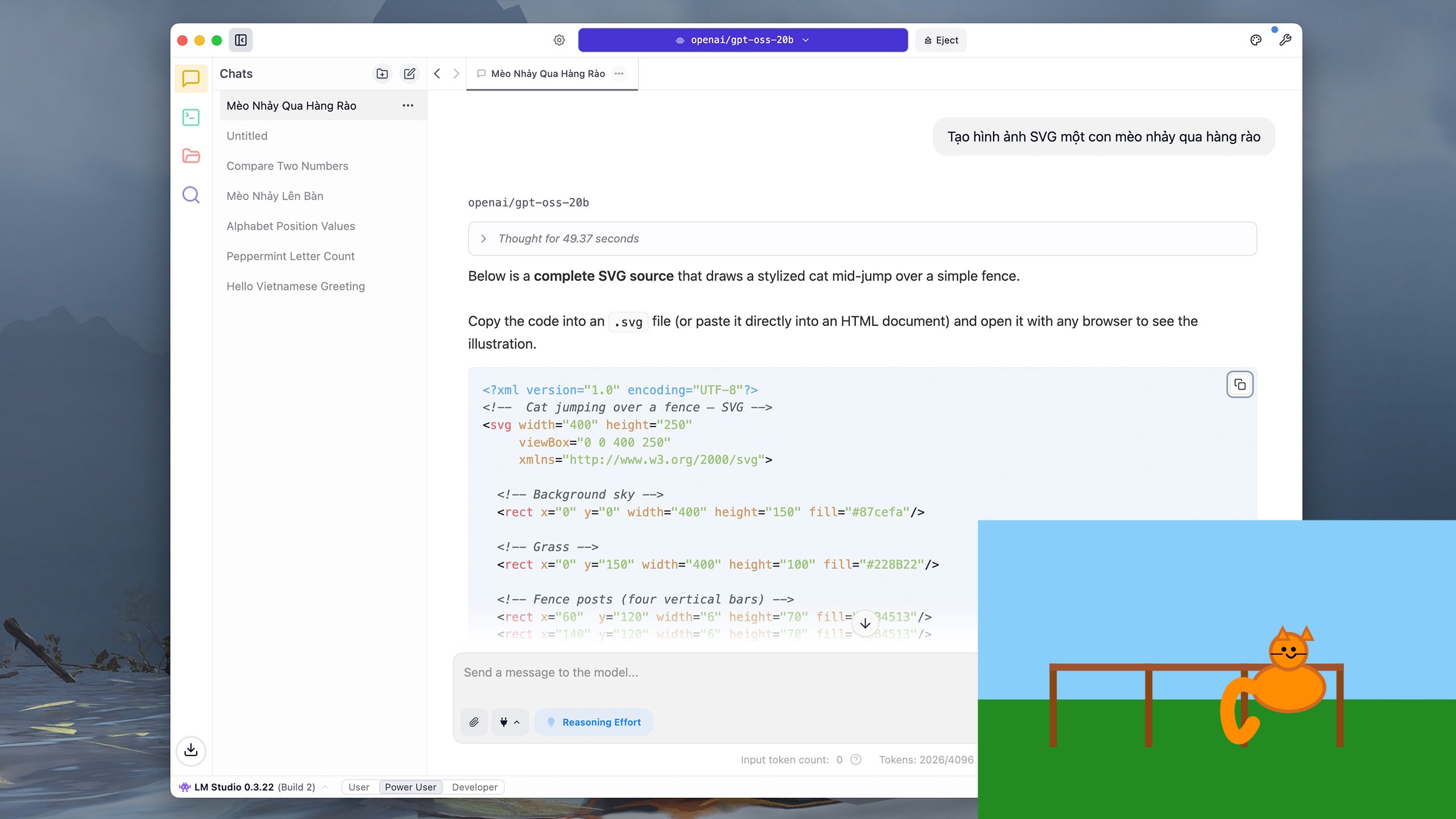

Users can also ask GPT-OSS-20b to write simple code, such as Python, or to draw vector graphics (SVG). For the prompt "Create an SVG image of a cat jumping over a fence," the model spent about 40 seconds reasoning and nearly 5 minutes writing the output.
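
Since a slow local model can run for minutes and still return broken markup, it may be worth checking the SVG before opening it. A minimal sketch using Python's standard-library XML parser; the helper names are hypothetical, and the check only confirms that the reply contains well-formed XML with an `<svg>` root, not that the picture actually shows a cat.

```python
import re
import xml.etree.ElementTree as ET
from typing import Optional

# Root tag with or without the standard SVG namespace declaration.
SVG_TAGS = {"svg", "{http://www.w3.org/2000/svg}svg"}


def extract_svg(reply: str) -> Optional[str]:
    """Return the first <svg>...</svg> span in a chat reply, if any."""
    # Non-greedy match: stops at the first closing </svg> tag.
    match = re.search(r"<svg\b.*?</svg>", reply, re.DOTALL)
    return match.group(0) if match else None


def is_well_formed_svg(reply: str) -> bool:
    """True if the reply contains markup that parses as XML with an <svg> root."""
    svg = extract_svg(reply)
    if svg is None:
        return False
    try:
        root = ET.fromstring(svg)
    except ET.ParseError:
        return False
    return root.tag in SVG_TAGS
```

The regular expression lets the check ignore any explanation or code fences the model wraps around the image.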

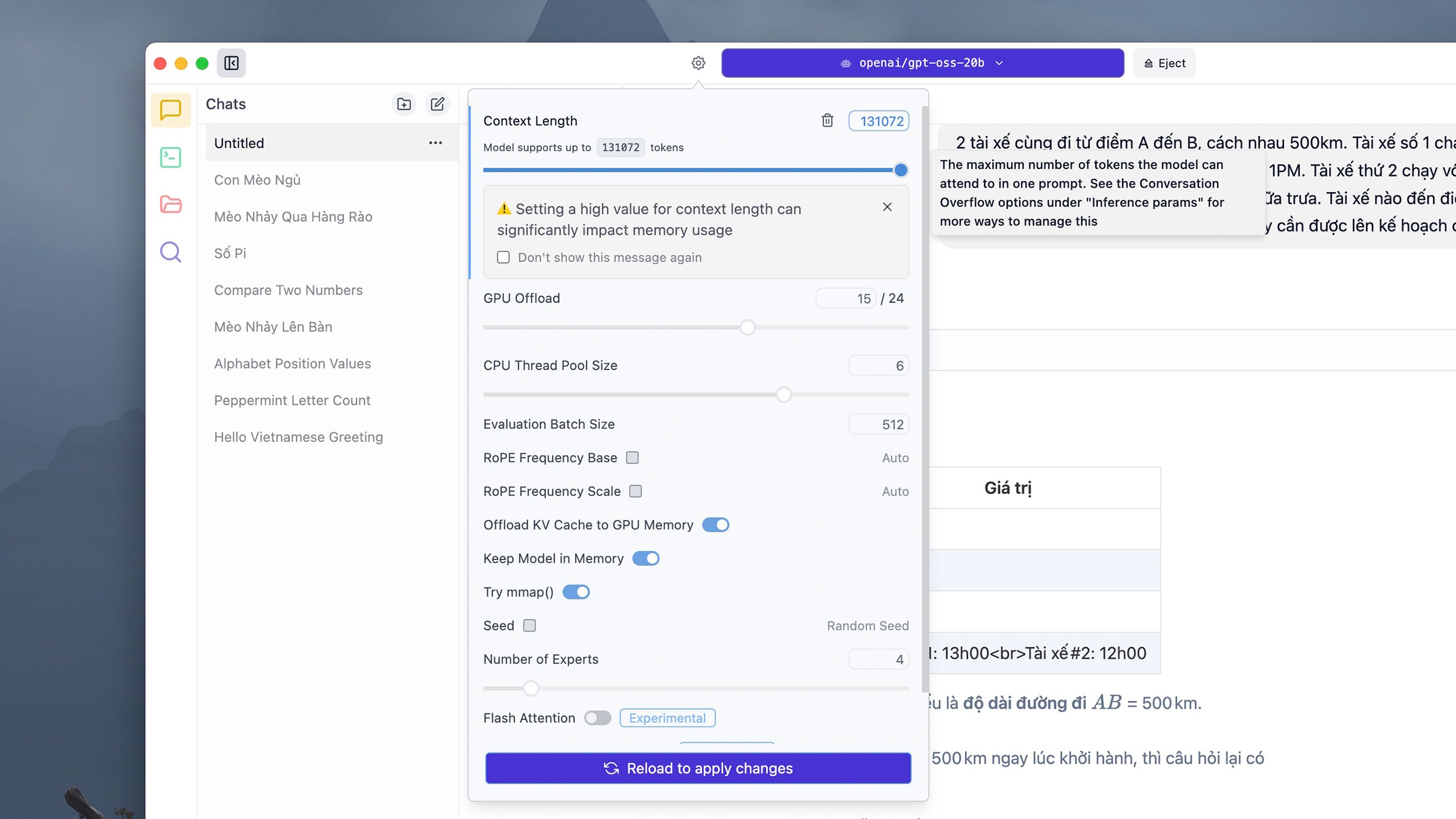

Some complex prompts can consume a lot of tokens. By default, each conversation has a context of 4,096 tokens, but users can click the Settings button next to the model selector at the top, change the value under Context Length, and then click Reload to apply the change. However, LM Studio warns that setting a very large context length may use a lot of RAM or VRAM.
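
The RAM warning follows from how transformer models generate text: the runtime caches attention keys and values for every token in the context, so that cache grows linearly with Context Length. A back-of-the-envelope sketch, assuming a standard key/value cache stored in 16-bit precision and a hypothetical model shape (24 layers, 8 key/value heads, 64-dimensional heads) chosen only for illustration; real values come from the model's configuration, and runtimes may use sliding-window attention or cache quantization that lower the figure.

```python
def kv_cache_bytes(context_tokens: int, n_layers: int, n_kv_heads: int,
                   head_dim: int, bytes_per_value: int = 2) -> int:
    """Memory for cached attention keys and values, in bytes."""
    # Factor 2: one key vector and one value vector per layer, head and token.
    return 2 * n_layers * n_kv_heads * head_dim * context_tokens * bytes_per_value


# Hypothetical model shape for illustration only.
LAYERS, KV_HEADS, HEAD_DIM = 24, 8, 64

for ctx in (4096, 32768, 131072):
    mib = kv_cache_bytes(ctx, LAYERS, KV_HEADS, HEAD_DIM) / 2**20
    print(f"{ctx:>7} tokens -> {mib:,.0f} MiB")
```

Under these assumptions the default 4,096 tokens cost about 192 MiB on top of the model weights, while 131,072 tokens would need about 6 GiB, a serious load on a 16 GB machine.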

Because the model runs directly on the device, its response time varies with the hardware. On an iMac M1 with 16 GB of RAM, a complex calculation like the one above took GPT-OSS-20b about 5 minutes to reason through and answer, while ChatGPT needed only about 10 seconds.

In terms of safety, OpenAI says this is the most thoroughly tested open model it has released to date. The company worked with independent reviewers to make sure the model does not pose risks in sensitive areas such as cybersecurity and biology. GPT-OSS's reasoning process is also fully visible, which helps detect misbehavior, deception, or misuse.

Besides LM Studio, users can run GPT-OSS with other apps such as Ollama. However, Ollama requires a command-line window (Terminal) to download and launch the model before switching to the normal chat interface. On Mac computers, responses were also slower with Ollama than with LM Studio.
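
Once the model has been pulled and started from the Terminal (for example with `ollama run gpt-oss:20b`), Ollama also serves a local HTTP API, by default on port 11434. The sketch below calls its `/api/chat` endpoint with streaming turned off so the reply arrives as a single JSON object. The tag `gpt-oss:20b` is an assumption based on Ollama's model library at the time of writing; confirm it with `ollama list`.

```python
import json
import urllib.request

# Assumptions: Ollama is running locally on its default port (11434) and the
# model was pulled under MODEL_TAG; verify the tag with `ollama list`.
OLLAMA_URL = "http://localhost:11434/api/chat"
MODEL_TAG = "gpt-oss:20b"


def build_ollama_request(prompt: str, model: str = MODEL_TAG) -> urllib.request.Request:
    """Build a non-streaming request for Ollama's /api/chat endpoint."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # one JSON object instead of a stream of chunks
    }
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_ollama(prompt: str) -> str:
    """Send the prompt and return the assistant's reply text."""
    with urllib.request.urlopen(build_ollama_request(prompt), timeout=600) as resp:
        return json.load(resp)["message"]["content"]
```

Turning streaming off keeps the example short; a chat app would normally leave it on so text appears as it is generated.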

Source: https://znews.vn/chatgpt-ban-mien-phi-lam-duoc-gi-post1574987.html
