OpenAI's chatbot is constantly improving through new technologies. Photo: New York Times.

In September 2024, OpenAI launched a version of ChatGPT that integrates the o1 model, which is capable of reasoning through tasks in mathematics, science, and computer programming.

Unlike earlier versions of ChatGPT, the new model takes time to "think" through complex problems before providing a response.

Following OpenAI, competitors such as Google, Anthropic, and DeepSeek have introduced similar reasoning models. Although imperfect, reasoning is an approach to upgrading chatbots that many developers are betting on.

How AI reasons

Essentially, reasoning means that a chatbot spends more time working on the problem a user poses.

"Reasoning is how the system performs additional work after receiving a question," Dan Klein, a computer science professor at the University of California, Berkeley, told the New York Times.

A reasoning system can break a problem down into smaller, individual steps, or solve it through trial and error.

When first launched, ChatGPT answered questions instantly by extracting and synthesizing information. In contrast, reasoning systems need a few more seconds (or even minutes) to work through the problem before responding.

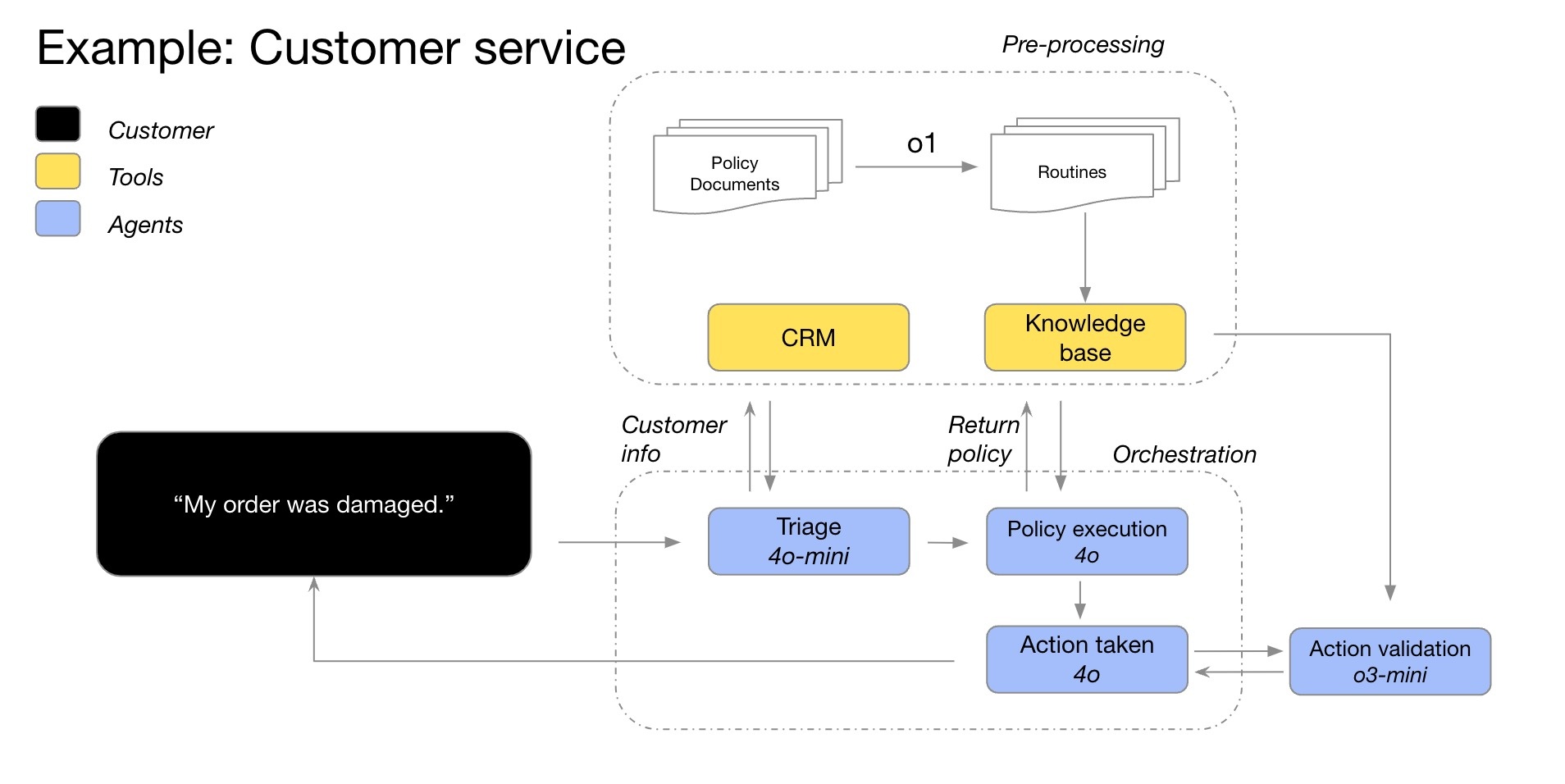

An example of the reasoning process of the o1 model in a customer service chatbot. Image: OpenAI.

In some cases, the reasoning system will change its approach to the problem, continuously improving the solution. Additionally, the model may test multiple solutions before making an optimal choice, or check the accuracy of previous responses.

In general, the reasoning system works through many possible answers to a question. This is similar to an elementary school student writing down several options on paper before choosing the most appropriate solution to a math problem.
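The idea of trying several candidates and checking each one can be illustrated with a very small sketch. This is only a toy analogy, not how o1 or any other reasoning model works internally: the equation, the `check` function, and the candidate range are all assumptions chosen for illustration.

```python
def check(candidate: int) -> bool:
    """Verify a candidate: does it satisfy x^2 - 5x + 6 = 0?"""
    return candidate * candidate - 5 * candidate + 6 == 0


def solve_by_trial(candidates):
    """Try every candidate in turn and keep only those that pass the check."""
    tried = []
    solutions = []
    for x in candidates:
        tried.append(x)          # record every attempt, like scratch work on paper
        if check(x):
            solutions.append(x)  # keep the attempts that verify
    return solutions, tried


# Hypothetical search space: the integers from -10 to 10.
solutions, tried = solve_by_trial(range(-10, 11))
print(f"tried {len(tried)} candidates, kept {solutions}")
```

The point of the sketch is the split between proposing answers and verifying them: spending more time lets the system examine more candidates before committing to one.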

According to the New York Times, AI can now reason about almost any topic. However, it is most effective on questions related to mathematics, science, and computer programming.

How are reasoning systems trained?

With an ordinary chatbot, users can still ask it to explain its process or verify the accuracy of its response. In fact, much of ChatGPT's training data already includes worked problem-solving steps.

A reasoning system goes further: it carries out these steps on its own, without being asked by the user, and the process is more complex and extensive. Companies use the word "reasoning" because the system appears to operate similarly to how humans think.

Many companies, including OpenAI, see reasoning systems as the best way currently available to improve chatbots. For years, they believed that the more internet data a chatbot was trained on, the better it performed.

By 2024, however, AI systems had used up almost all the text available on the internet. Companies therefore needed new ways to upgrade chatbots, and reasoning systems were one of them.

Startup DeepSeek caused a sensation with a reasoning model that cost less than OpenAI's. Photo: Bloomberg.

Since last year, companies like OpenAI have focused on reinforcement learning techniques. This process typically takes several months, during which the AI learns behavior through trial and error.

For example, by working through thousands of problems, the system can learn which methods lead to the correct answer. To make this possible, researchers build sophisticated feedback mechanisms that tell the system whether a solution is correct or incorrect.
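A minimal sketch of learning from this kind of feedback is shown below. It is a toy example under stated assumptions, not the training procedure used by OpenAI or any other company: the two strategies (`careful` and `sloppy`), the addition task, and the epsilon-greedy rule are all hypothetical choices made for illustration. The system tries a strategy, receives a reward of 1 when the answer is correct and 0 otherwise, and gradually comes to prefer the strategy that earns more reward.

```python
import random


def careful(a, b):
    return a + b        # hypothetical strategy that always adds correctly


def sloppy(a, b):
    return a + b + 1    # hypothetical strategy that is always off by one


STRATEGIES = {"sloppy": sloppy, "careful": careful}


def train(episodes=500, epsilon=0.1, seed=0):
    """Epsilon-greedy trial and error over the two strategies."""
    rng = random.Random(seed)
    values = {name: 0.0 for name in STRATEGIES}  # estimated average reward
    counts = {name: 0 for name in STRATEGIES}    # how often each was tried
    for _ in range(episodes):
        a, b = rng.randint(0, 9), rng.randint(0, 9)
        if rng.random() < epsilon:
            name = rng.choice(list(STRATEGIES))   # explore: pick at random
        else:
            name = max(values, key=values.get)    # exploit: pick the best so far
        # Feedback mechanism: reward 1 for a correct answer, 0 otherwise.
        reward = 1.0 if STRATEGIES[name](a, b) == a + b else 0.0
        counts[name] += 1
        # Incremental running average of the rewards seen for this strategy.
        values[name] += (reward - values[name]) / counts[name]
    return values


values = train()
best = max(values, key=values.get)
print(best, values)
```

The reward check here is trivial because the correct sum is known. That is also why this style of training suits problems whose answers can be verified automatically.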

"It's similar to how you train a dog. If the system works well, you give it a treat. Otherwise, you say, 'That dog is naughty,'" shared Jerry Tworek, a researcher at OpenAI.

Is AI the future?

According to the New York Times, reinforcement learning works well for problems in mathematics, science, and computer programming. These are fields where correct and incorrect answers can be clearly defined.

Conversely, reinforcement learning is less effective in writing, philosophy, or ethics, fields where distinguishing good from bad is difficult. Nevertheless, researchers say the technique can still improve AI performance, even on non-mathematical questions.

"Systems will learn the paths that lead to positive and negative outcomes," said Jared Kaplan, Chief Science Officer at Anthropic.

Website of Anthropic, the startup that develops the Claude AI model. Photo: Bloomberg.

It's important to note that reinforcement learning and reasoning systems are two different concepts. Reinforcement learning is a method for building reasoning systems: it is the final training stage that enables chatbots to reason.

Because these techniques are still relatively new, scientists cannot yet be certain whether chatbot reasoning or reinforcement learning will help AI think like humans. Many current AI training trends progress very rapidly at first and then gradually slow down.

Furthermore, reasoning chatbots can still make mistakes. The system works on probability: it chooses the process that most closely resembles the data it has learned from, whether that data came from the internet or from reinforcement learning. As a result, chatbots can still choose incorrect or illogical solutions.
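Why a probability-based choice can still go wrong can be seen in a small sketch. The scores, the two option labels, and the use of a softmax are assumptions made for illustration; real systems are far more complex. Even when the correct path scores higher, the wrong path keeps a nonzero probability, so it is still picked some of the time.

```python
import math
import random


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    exps = {name: math.exp(s) for name, s in scores.items()}
    total = sum(exps.values())
    return {name: e / total for name, e in exps.items()}


# Hypothetical scores: the correct path resembles the learned data more closely.
scores = {"correct steps": 2.0, "plausible but wrong steps": 1.0}
probs = softmax(scores)

# Sample 1000 choices according to those probabilities.
rng = random.Random(0)
names = list(probs)
draws = rng.choices(names, weights=[probs[n] for n in names], k=1000)
wrong = draws.count("plausible but wrong steps")

print(probs)
print(f"wrong path chosen {wrong} times out of 1000")
```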

Source: https://znews.vn/ai-ly-luan-nhu-the-nao-post1541477.html
