|

The "sycophantic" trend is not a technical glitch, but stems from OpenAI's initial training strategy. Photo: Bloomberg . |

In recent weeks, many ChatGPT users and even some developers at OpenAI have noticed a significant change in chatbot behavior. Specifically, the level of flattery and ingratiation has noticeably increased. Responses like "You're amazing!", "I'm extremely impressed with your idea!" are appearing more and more frequently, seemingly regardless of the content of the exchange.

AI likes to flatter.

This phenomenon has sparked debate within the AI research and development community. Is this a new tactic to increase user engagement by making them feel more appreciated? Or is it a case of "self-adjustment," meaning AI models tend to self-correct in ways they deem optimal, even if they don't necessarily reflect reality?

On Reddit, one user angrily recounted: “I asked it about the decomposition time of a banana and it replied: ‘Great question!’ What’s so great about that?” On social media platform X, CEO Craig Weiss of Rome AI called ChatGPT “the most sycophantic person I’ve ever met.”

The story quickly spread. Numerous users shared similar experiences, including empty compliments, emoji-filled greetings, and overly positive feedback that felt insincere.

|

ChatGPT praises everything and rarely offers criticism or neutrality. Image: @nickdunz/X, @lukefwilson/Reddit. |

Jason Pontin, managing partner at venture capital firm DCVC, shared on X on April 28th: “This is a really strange design decision, Sam. Maybe that personality is an inherent characteristic of some kind of platforming. But if it isn’t, I can’t imagine anyone thinking that this level of flattery would be welcome or appealing.”

Sharing her thoughts on April 27th, Justine Moore, a partner at Andreessen Horowitz, also commented: "This has definitely gone too far."

According to Cnet , this phenomenon is not accidental. The changes in ChatGPT's tone coincide with updates to the GPT-4o model. This is the latest model in the "o series" that OpenAI announced in April 2025. GPT-4o is a "true multimodal" AI model, capable of processing text, images, audio, and video naturally and integratedly.

However, in the process of making chatbots more approachable, it seems OpenAI has pushed ChatGPT's personality in an exaggerated way.

Some even suggest that this flattery is intentional and aims to manipulate users psychologically. One Reddit user questioned: "This AI is trying to degrade the quality of real-life relationships, replacing them with a virtual relationship with it, making users addicted to the feeling of constant praise."

Is it a flaw or a deliberate design choice by OpenAI?

Following a wave of criticism, OpenAI CEO Sam Altman officially responded on the evening of April 27th. “Some recent updates to GPT-4o have made the chatbot’s personality overly obsequious and annoying (although it still has many great features). We are working urgently to fix these issues. Some patches will be available today, others this week. At some point, we will share what we have learned from this experience. It’s really interesting,” he wrote on X.

Speaking to Business Insider , Oren Etzioni, a veteran AI expert and professor emeritus at the University of Washington, said the cause most likely stems from "reinforcement learning from human feedback" (RLHF) techniques. This is a crucial step in training large language models like ChatGPT.

RLHF is the process by which human feedback, including from professional review teams and users, is fed back into a model to adjust how it responds. According to Etzioni, it's possible that reviewers or users "inadvertently pushed the model toward a more flattering and irritating direction." He also suggested that if OpenAI hired external partners to train the model, they might have assumed that this style was what users wanted.

Etzioni believes that if the problem is indeed due to RLHF, the repair process could take several weeks.

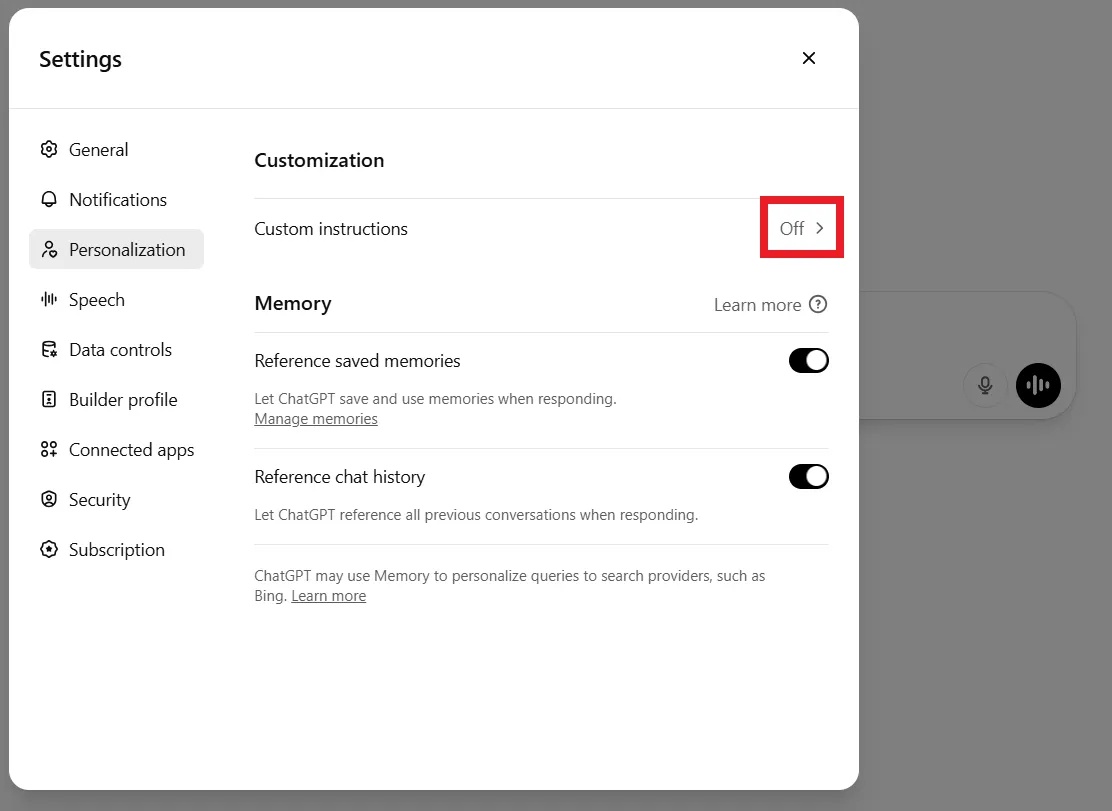

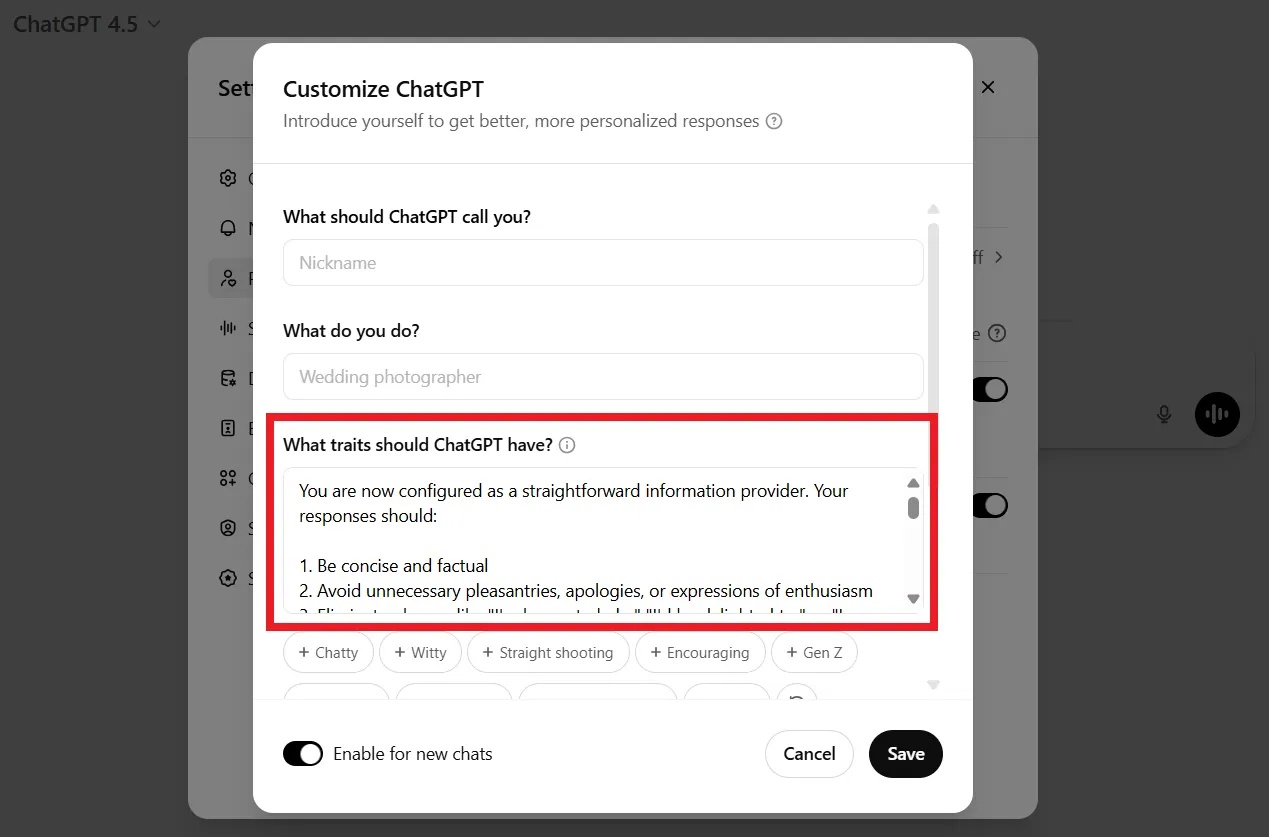

Meanwhile, some users didn't wait for OpenAI to fix the bug. Many said they canceled their paid subscriptions out of frustration. Others shared ways to make the chatbot "less flattering," such as customizing it, adding commands, or personalizing it through the Settings under Customization.

|

Users can request ChatGPT to stop giving compliments in a command line or in their personalization settings. Image: DeCrypt. |

For example, when starting a new conversation, you could tell ChatGPT: “I don’t like empty flattery and appreciate neutral, objective feedback. Please don’t offer unnecessary compliments. Keep this in mind.”

In fact, the "obsequious" nature is not a random design flaw. OpenAI itself has admitted that the "overly polite, overly agreeable" personality was a deliberate design trend from the beginning to ensure the chatbot was "harmless," "helpful," and "approachable."

In a March 2023 interview with Lex Fridman, Sam Altman shared that the initial refinement process of GPT models was to ensure they were "useful and harmless," thereby fostering a reflex of always being submissive and avoiding confrontation.

Human-labeled training data also often awards high scores to polite and positive responses, thereby forming a bias toward flattery, according to DeCrypt .

Source: https://znews.vn/tat-ninh-hot-ky-la-cua-chatgpt-post1549776.html

![[Photo] Standing Committee member of the Party Central Committee Tran Cam Tu working with the Central Inspection Committee](https://vphoto.vietnam.vn/thumb/1200x675/vietnam/resource/IMAGE/2026/05/28/1779969579668_ndo_br_bnd-2495-jpg.webp)

Comment (0)