
Anthropic has just updated its policy. Photo: GK Images.

On August 28th, AI company Anthropic announced updates to its Consumer Terms and Privacy Policy. Users can now choose whether to allow their data to be used to improve Claude and to strengthen protections against abuses such as phishing and fraud.

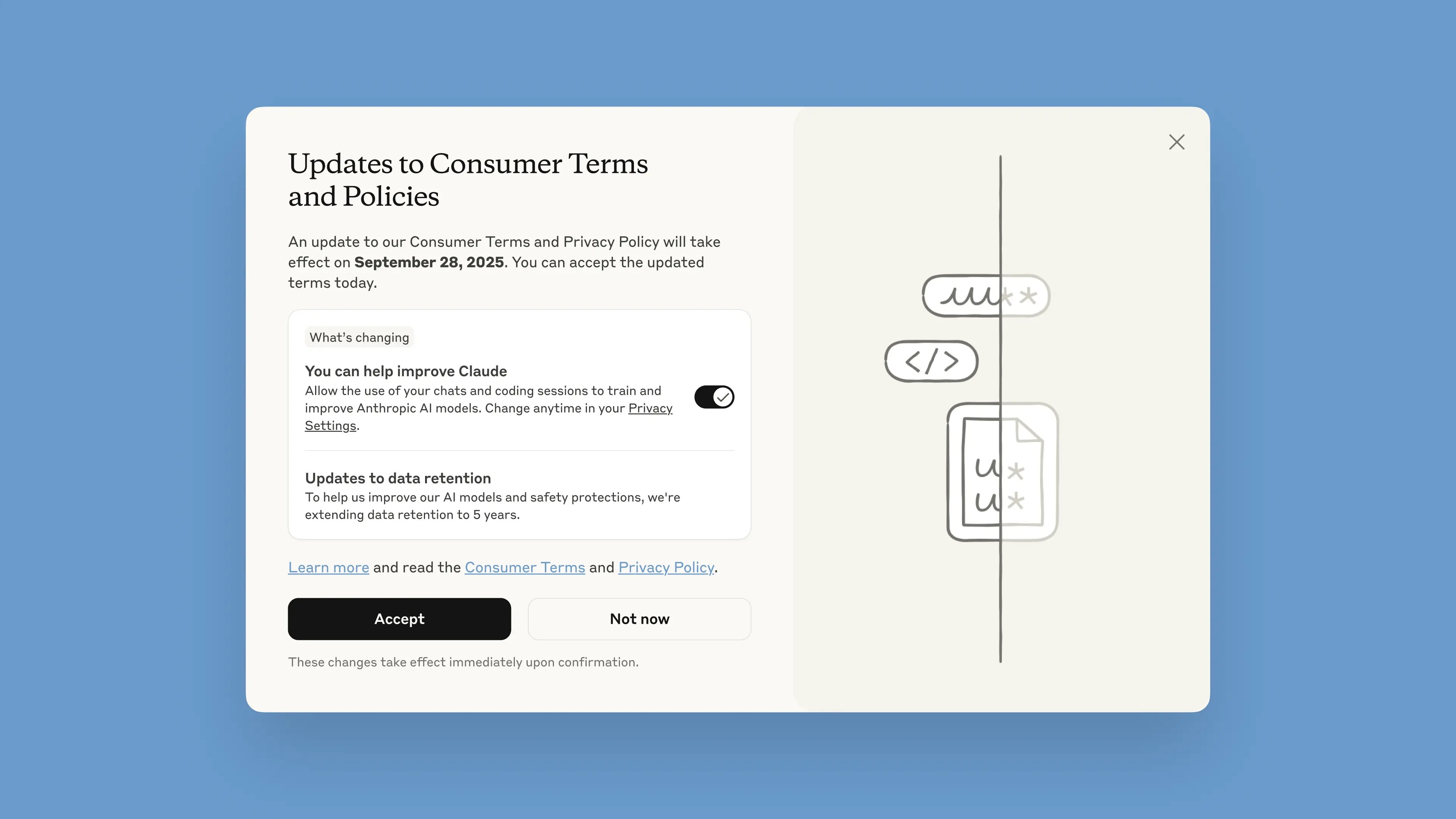

The notification will roll out in the chatbot starting August 28th. Users have one month to accept or reject the terms, and the new policies take effect immediately once accepted. After September 28th, users will have to make a choice in order to continue using Claude.

According to the company, the updates are intended to help it build more capable and useful AI models. Users can change this setting at any time in Privacy Settings.

These changes apply to the Claude Free, Pro, and Max plans, including when Claude Code, Anthropic's programming tool, is used with those accounts. They do not apply to services covered by Anthropic's Commercial Terms, such as Claude for Work, Claude Gov, and Claude for Education, or to API use, including access through third parties such as Amazon Bedrock and Google Cloud's Vertex AI.

In its blog post, Anthropic stated that by participating, users will help improve the model's safety, making it more accurate at detecting harmful content and less likely to mistakenly flag harmless conversations. Future versions of Claude will also gain stronger skills in areas such as programming, analysis, and reasoning.

Users retain full control over their data: a clearly visible pop-up window lets them choose whether or not to allow the platform to use it. New users can make this choice during sign-up.


The window displaying the new Terms and Conditions in the chatbot. Image: Anthropic.

For users who allow their data to be used for training, the data retention period increases to five years. This applies to new conversations and coding sessions, as well as to feedback users give on the chatbot's responses. Deleted conversations will not be used for future model training. Users who do not agree to provide data for training remain under the current 30-day retention policy.

Explaining the policy, Anthropic argues that AI development cycles typically span several years, and keeping data consistent throughout the training process helps produce models that behave more consistently. Longer data retention also improves the classifiers and systems used to identify abusive behavior, because they can learn to recognize harmful patterns of use over a longer period.

"To protect users' privacy, we use a combination of tools and automated processes to filter or obfuscate sensitive data. We do not sell users' data to third parties," the company wrote.

Anthropic is often cited as one of the leading companies in AI safety. It developed a methodology called Constitutional AI, which sets out ethical principles and guidelines that the model must follow. Anthropic is also one of the companies that have signed AI safety commitments with the US, UK, and G7 governments.

Source: https://znews.vn/cong-ty-ai-ra-han-cuoi-cho-nguoi-dung-post1580997.html
